Interior Design: Music for the bionic ear.

Wednesday, 21 July 2010

Introduction to the project:

The residency comprises three months of research and development around the idea of composing music for cochlear implant wearers. The artist (Robin Fox…that’s me) is working in close collaboration with Bionic Ear Institute research scientists Hamish Innes-Brown and Jeremy Marozeau to tailor music, or more broadly to ‘organize sound’, for reception via current implementations of cochlear implant sound processing and emitting software and hardware. The Bionic Ear Institute has a range of research focus areas; this residency is nested within the recently established Pitch Perception Project. The cochlear implant as it currently operates has had a great deal of success in restoring users’ ability to hear and understand speech. Appreciation of music, however, involves a much more complex and ‘broadband’ set of parameters, particularly in the spectrum of audible sounds necessary to appreciate changes in pitch and, even more so, sound timbre. The goal of the Pitch Perception Project is to research and devise strategies for overcoming this limitation of the current software and hardware combinations. While some of these solutions may involve addressing hardware issues directly, another angle is to adapt music itself and design it for the hardware. This is where the composers come in.

Part of my residency will involve co-ordinating a concert of commissioned works composed with the reception via a cochlear implant in mind. The commissioning phase is underway with a combination of instrumental and electroacoustic works being developed by Natasha Anderson, Rohan Drape, Eugene Ughetti, James Rushford, Ben Harper and myself. Meetings between the group and scientists from the BEI will begin next week. Really looking forward to the discussions and issues that will be raised at this meeting. What follows is a diary style account of the residency to date. This will be updated from time to time over the coming months.

Week 1: Beginning 28th June

Monday: Reading and digesting literature on the implant and recent research into pitch perception. Examining Wave Field Synthesis as a possible sound processing option. The idea of pursuing a cochlear implant/wave field synthesis relationship was one of those weird moments of free association, triggered by a physical similarity in the diffusion systems of the two and mediated through recent readings around arthropod bio-acoustics. The cochlear implant itself (that is, the bit that is ‘implanted’ into the cochlea) is a tiny thin strip with 22 electrodes placed along it in a linear array. These 22 electrodes fire electrical signals directly at the auditory nerve, essentially replacing the function of the basilar membrane. It occurred to me that transcoding external sound into electrical impulses in this way, and then sending those impulses over such a short distance (micrometers), is a translation from the compression and rarefaction of air (a sound wave) to a displacement of particles. So really, it is the translation of a phenomenon that happens in air over a greater distance into a signal that is only understood over a very short distance. This seemed to resonate with reading I was undertaking a while back on the sound generation and listening apparatus of various insects, including the cicada. The line of 22 electrodes also brought to mind a relatively new form of synthesis known as wave field synthesis (used largely in the creation of virtual acoustic environments), which requires a linear array of loudspeakers to generate wave fields that give the impression of a source sound. The technique rests on Huygens’ principle that ‘any wave front can be regarded as a superposition of elementary spherical waves. Therefore, any wave front can be synthesized from such elementary waves.’ (Look up WFS on Wikipedia! This is a blog, right? No need for hardcore academic referencing protocols!)
These two poetic convergences got me thinking about possible ways to approach composition of sounds for the cochlear implant. More on the results later, as they arrive, or don’t as the case may be.
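
For readers who want to see the Huygens idea in numbers, here is a rough sketch in Python. It is not a full wave field synthesis driving function, only a simplified delay-and-attenuate model: each loudspeaker in a line replays the source signal, delayed by the time sound would take to travel from a virtual source to that loudspeaker. The 22-speaker array, the 10 cm spacing, the source position and the function name `driving_delays_and_gains` are all assumptions chosen for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, assumed room-temperature value


def driving_delays_and_gains(source_xy, speaker_xs, array_y=0.0):
    """Return one (delay_seconds, gain) pair per loudspeaker.

    Simplified model (an assumption, not the complete WFS operator):
    the delay is the travel time from the virtual source to the
    loudspeaker, and the gain falls off as 1 / sqrt(distance).
    """
    source_x, source_y = source_xy
    result = []
    for x in speaker_xs:
        distance = math.hypot(x - source_x, array_y - source_y)
        result.append((distance / SPEED_OF_SOUND, 1.0 / math.sqrt(distance)))
    return result


# Hypothetical layout: 22 loudspeakers, 10 cm apart, centred on x = 0,
# with the virtual source 2 m behind the middle of the array.
speaker_xs = [(i - 10.5) * 0.10 for i in range(22)]
params = driving_delays_and_gains((0.0, -2.0), speaker_xs)

for index, (delay, gain) in enumerate(params):
    print(f"speaker {index:2d}: delay {delay * 1000:.3f} ms, gain {gain:.3f}")
```

The loudspeakers nearest the virtual source fire first and the outer ones slightly later, so the separate little wavelets add up to one curved wave front.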

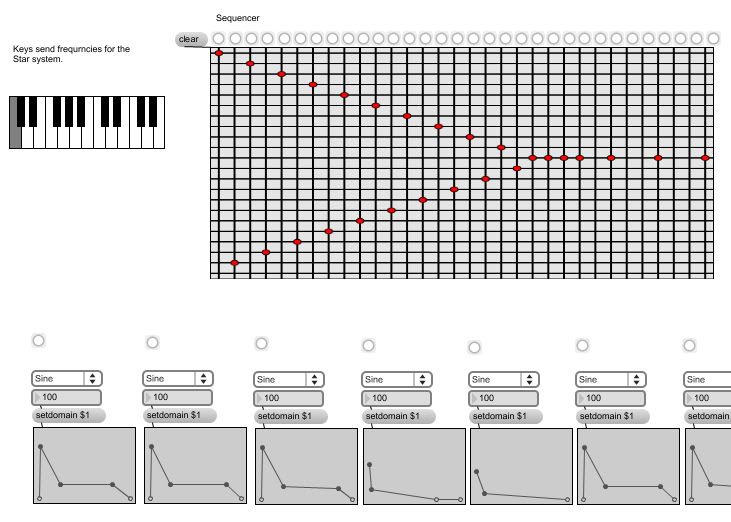

Tuesday: Built a ‘Cochlear Synthesizer’ in Max/MSP: a simple synthesizer and 32-step sequencer built with the cochlear implant frequencies as a starting point. That is, the synthesizer has 22 ‘tones’, each one tuned to the centre frequency of one of the filters that the cochlear implant hardware uses to prepare incoming sound for resynthesis by the electrode array. This simple tool allowed me to begin formulating ideas for short studies designed for testing, radio demonstrations etc.

Wednesday: Meetings at the BEI. Discussions with Hamish, Jeremy, Phoebe Faulkner (a third-year sound student from RMIT currently conducting an internship with me) and Daniela, who wears two cochlear implants, about the experience of receiving the implants and the subsequent experience of listening with them. These discussions were fascinating. Daniela began to go deaf at 27 years of age due to complications arising from an infection. She now uses two cochlear implants simultaneously (one for each ear of course) and is a remarkable case, as she has a particularly strong ability to distinguish pitches that are quite close together, something that, the research has shown, is very difficult for most implant wearers. There may be a number of reasons for this, including the fact that Daniela was a keen amateur musician before her hearing loss and the fact that her auditory nerve had been fully active for 27 years prior to her hearing loss. In today’s climate of discussions around neuro-plasticity and the changing ways in which we understand things like perception, it could be argued that Daniela has performed some kind of ‘re-mapping’ that allows her to appreciate subtleties in music more quickly than others, who may have to re-innervate the nerves and rebuild neurological responses to nerve activity. What is remarkable to me is that the different experience of each cochlear implant recipient seems to mirror the subjectivity inherent in the appreciation of music generally.
I have always questioned whether we hear the same things as individual appreciators of music, and how much of what we hear is culturally coded or based on repetition and the strengthening of certain synaptic pathways and complex associations. This accounts for the lack of any real language to describe music other than the description of structural detail (itself an autonomous pole of discourse and wildly boring to anyone not familiar with it…), an endless array of meaningless adjectives, or references to things outside of music. If we are indeed Pavlovian listeners then there may yet be hope for coaxing associations back into play in cochlear implant recipients. The results may not be music as we know it now but may well be a brave new solfege!

Was taken on a fascinating tour of the wet-labs at St Vincent’s Hospital, where I saw the recent developments in the bionic eye project as well as the engineering labs where the test versions of new models are made. Finally we returned to the BEI for a media interview and photo shoot with The Age newspaper.

Week 2: Beginning 5th July

Monday: Painful day working on the simulator in Max/MSP. Still trying to work out the finer points of this system, so the day was spent trying to organize data in sensible ways. The simulator will be an important tool in the composition of works for the cochlear implant, giving the composers an audible reference for what the electrodes inside the implant are doing.

Tuesday: Met with the staff of Arts Victoria and the Victorian Arts Minister Peter Batchelor at a function in Footscray congratulating grant recipients from the previous funding round. The BEI was successful in securing funds for the presentation of the Interior Design concert of commissioned musical works for the cochlear implant.

Wednesday: More work with the synthesizer and simulator. Meeting with Elliott Gyger, a musicologist from Melbourne University interested in documenting the composition and performance of the musical works. The interesting thing to come out of this conversation for me was the fact that this composition project changes the rules, and particularly the rules governing the romantic conception of composition: namely, one man’s (and I use the gender intentionally here) struggle with his inner vision (always genius of course) and his heroic attempts to force this incredible revelation out of his tortured mind and convey it to a moronic public hungry for a heightened sense of their own cultivation. Thankfully, I subscribe to a very different view of creation. This project starts with the premise that anything happening in my mind musically is irrelevant and that the focus must be on new methods and new ways of approaching the organisation of information, and therefore on the creation of new music. It has always been my goal to make sound and music that was in some way impossible to even imagine before it was created, because the process by which it was created hadn’t been attempted or tested before.

Thursday: Meetings with Arts Access about securing a venue for the forthcoming Interior Design concert. Meeting conducted with Rob Gebert (Program Manager, Community and Contemporary Cultures) from the Victorian Arts Centre. Discussions centred around gaining access to the Fairfax studio theatre over two nights for the presentations. These discussions were very positive.

Friday: Move towards thinking about how to address this music to cochlear implant wearers. After two weeks of research it seems that the window of possibility is shrinking rather than getting bigger. The best indicator of what is actually going on in the transmission of information from the electrode array to the auditory nerve is a visual graphing tool called the electrodometer. This machine allows you to play sound directly into an implant (though it’s not implanted of course) and generate a visualization of the electrode activity in graph form. Running some preliminary tests with this device demonstrated that the simple cochlear synthesizer that I built in Max/MSP is successful in addressing individual electrodes. Simple fanning rhythmic patterns alternating between high and low frequencies tending toward the centre of the spectrum were replicated well across the electrodes.

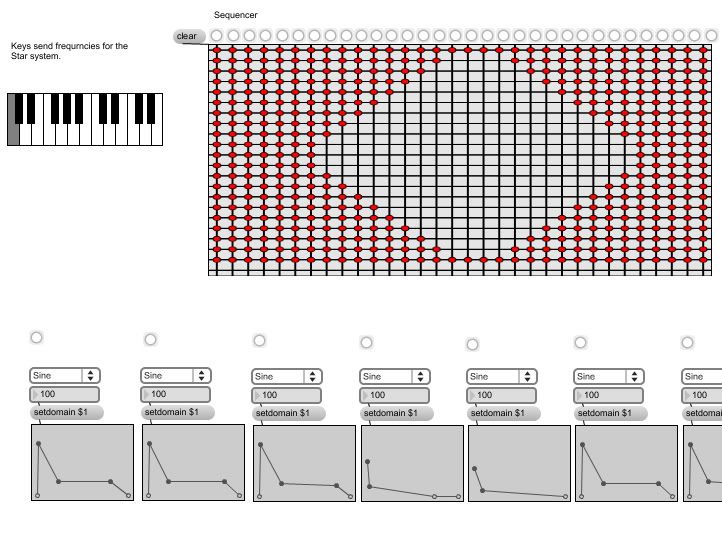
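
The 22-tone synthesizer and the fanning pattern described above can be sketched outside Max/MSP as well. The Python below is an illustration under stated assumptions, not a port of the actual patch: the centre frequencies are a hypothetical log-spaced placeholder between 250 Hz and 7 kHz (a real implant uses its own frequency allocation table, which is not given here), and the names `centre_frequencies`, `fanning_pattern` and `render` are invented for this sketch.

```python
import math

NUM_TONES = 22        # one tone per electrode, as in the synthesizer above
SAMPLE_RATE = 44100   # samples per second


def centre_frequencies(low_hz=250.0, high_hz=7000.0, n=NUM_TONES):
    """Placeholder tuning: n log-spaced frequencies from low_hz to high_hz.

    ASSUMPTION: these are not the implant's real filter centre frequencies.
    """
    ratio = (high_hz / low_hz) ** (1.0 / (n - 1))
    return [low_hz * ratio ** i for i in range(n)]


def fanning_pattern(n=NUM_TONES):
    """Alternate lowest, highest, next lowest, next highest, ...

    The sequence closes in on the centre of the spectrum.
    """
    order = []
    low, high = 0, n - 1
    while low <= high:
        order.append(low)
        if high != low:
            order.append(high)
        low += 1
        high -= 1
    return order


def render(tone_indices, freqs, step_seconds=0.125):
    """Render one plain sine tone per sequencer step as a list of floats."""
    samples_per_step = int(SAMPLE_RATE * step_seconds)
    samples = []
    for index in tone_indices:
        freq = freqs[index]
        for k in range(samples_per_step):
            samples.append(math.sin(2 * math.pi * freq * k / SAMPLE_RATE))
    return samples


freqs = centre_frequencies()
pattern = fanning_pattern()
audio = render(pattern, freqs)
print(pattern)
```

Each step plays exactly one tone, so in principle each step should light up one electrode at a time. The raw sine bursts will click at the step boundaries; a short fade in and out per step would be the obvious next refinement.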

Other patterns employing a cumulative polyphony led, quite predictably, to overlapping information being fired by multiple electrodes. The example below shows a sequence that moves from total tone density to a sparse high/low frequency separation in the middle of the pattern and back to density. The corresponding graph shows this move from spectral density to clarity in the information generated across the electrodes really well. It is this difference, it seems, that will form the basis of a new solfege for the composition of musical information. In many ways, there is a real poetic connection between working in this way and Edgard Varèse’s observation in the mid-20th century that music is no more than ‘organized sound.’ Here, as a composer, one is confronted with a situation where the only reliable parameter available is the organisation of the density or scarcity of electrical stimulation across an electrode array. By organizing this ‘difference’ the composer can reliably effect the sensation of ‘change’ in the listener.
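
As a rough illustration of the dense-to-sparse-to-dense idea, the Python sketch below builds a 32-step grid over 22 tones. The way tones are thinned out (keeping the outermost low and high tones and dropping the middle ones as the pattern approaches its midpoint) is an assumption made for this example, not a transcription of the sequence used in the tests, and `density_pattern` is a hypothetical name.

```python
NUM_TONES = 22   # tones, ordered low to high
NUM_STEPS = 32   # sequencer steps


def density_pattern(num_steps=NUM_STEPS, num_tones=NUM_TONES):
    """Return a grid of booleans: grid[step][tone] is True if the tone sounds.

    All tones sound at the first and last step; only the lowest and
    highest tone remain at the middle of the pattern.
    """
    grid = []
    half = (num_steps - 1) / 2.0
    for step in range(num_steps):
        # 1.0 at either end of the pattern, close to 0.0 in the middle
        distance = abs(step - half) / half
        # how many tones to keep at each edge of the spectrum (at least one)
        per_side = max(1, round(distance * num_tones / 2))
        row = [t < per_side or t >= num_tones - per_side
               for t in range(num_tones)]
        grid.append(row)
    return grid


grid = density_pattern()

# Print with time running left to right and the highest tone on the top row.
for tone in reversed(range(NUM_TONES)):
    print("".join("#" if grid[step][tone] else "." for step in range(NUM_STEPS)))
```

Printed this way the grid shows a solid block at either end that hollows out to two thin lines in the middle, which is the kind of contrast between density and scarcity that the paragraph above describes.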

Corresponding images from the electrodogram and example soundbites to come!

